This subclass of machines is practically equivalent to the single-processor vectorprocessors, although other interesting machines in this subclass have existed (viz. VLIW machines [32]). In the block diagram in Figure 1 we depict a generic model of a vector architecture.

Figure 1:

Block diagram of a vector processor.

The single-processor vector machine will have only one of the vectorprocessors depicted and the system may even have its scalar floating-point capability shared with the vector processor (as was the case in some Cray systems). It may be noted that the VPU does not show a cache. The majority of vectorprocessors do not employ a cache anymore. In many cases the vector unit cannot take advantage of it and execution speed may even be unfavourably affected because of frequent cache overflow. Later on, however, this tendency was reversed because of the increasing gap in speed between the memory and the processors: the Cray X2 had a cache and NEC's SX-9 vector system has a facility that is somewhat like a cache.

Although vectorprocessors have existed that loaded their operands directly from memory and stored the results again immediately in memory (CDC Cyber 205, ETA-10), all present-day vectorprocessors use vector registers. This usually does not impair the speed of operations while providing much more flexibility in gathering operands and manipulation with intermediate results.

Because of the generic nature of Figure 1 no details of the interconnection between the VPU and the memory are shown. Still, these details are very important for the effective speed of a vector operation: when the bandwidth between memory and the VPU is too small it is not possible to take full advantage of the VPU because it has to wait for operands and/or has to wait before it can store results. When the ratio of arithmetic to load/store operations is not high enough to compensate for such situations, severe performance losses may be incurred.

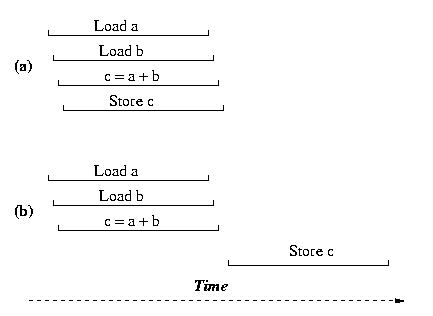

The influence of the number of load/store paths for the dyadic vector operation c = a + b (a, b, and c vectors) is depicted in 2.

Figure 2: Schematic diagram of a vector addition. Case (a) when two load- and one store pipe are available; case (b) when two load/store pipes are available.

Because of the high costs of implementing these datapaths between memory and the VPU, often compromises were sought and the number of systems that had the full required bandwidth (i.e., two load operations and one store operation at the same time) was limited. Only Cray Inc. in its former Y-MP, C-series, and T-series employed this very high bandwidth. Vendors rather rely on additional caches and other tricks to hide the lack of bandwidth.

The VPUs are shown as a single block in Figure 1. Yet, there is a considerable diversity in the structure of VPUs. Every VPU consists of a number of vector functional units, or "pipes" that fulfill one or several functions in the VPU. Every VPU will have pipes that are designated to perform memory access functions, thus assuring the timely delivery of operands to the arithmetic pipes and of storing the results in memory again. Usually there will be several arithmetic functional units for integer/logical arithmetic, for floating-point addition, for multiplication and sometimes a combination of both, a so-called compound operation. Division is performed by an iterative procedure, table look-up, or a combination of both using the add and multiply pipe. In addition, there will almost always be a mask pipe to enable operation on a selected subset of elements in a vector of operands. Lastly, such sets of vector pipes can be replicated within one VPU (2- up to 16-fold replication are occurs). Ideally, this will increase the performance per VPU by the same factor provided the bandwidth to memory is adequate.

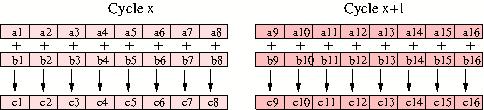

Lastly, it must be remarked that vectorprocessors as described here are not considered a viable economic option anymore and only NEC still produces such stand-alone vectorprocessing systems. Instead, in a small way, vectorprocessing is now integrated into the common CPU cores. Like in vectorprocessors the principal idea of vector processing is that the same operation is being performed on multiple elements of arrays at the same time. For instance, when one wants to add the elements of two arrays c = a + b several elements of these arrays can be handled at the same time. A vector instruction causes that the elements are loaded into the vector registers and are all added simultaneously. This has not only the effect of speeding up the computation it also leads to less instructions to be decoded as one instruction generates multiple results. Depending on the type of CPU 2–8 elemental results can be generated per clock cycle (a clock cycle being the fundamental time unit within the CPU). In Figure 1 the addition of 8 elements of arrays a and b is depicted.

Figure 3: Diagram of a vector addition.

Note that there is a difference in the processing of the vector elements in vectorprocessors and within the vector units of the common CPU cores. While the former produces a result every clock cycle, the latter yields several results in a single clock cycle.

Various additional units had to be added to CPUs to accomodate vector processing capabilities and corresponding vector instructions had to be added to the compilers to make use of them. Originally these vector units and instructions were meant to speed up graphical processes for multimedia applications but it was recognised soon that it could benefit computational work. For the x86 line of processors the vector units has for instance been the SSE2, SSE3, and SSE4 units. In the most recent Intel and AMD CPUs AVX units provide 8 32-bit precision floating-point results or 4 64-bit precision results, while in the IBM BlueGene/Q (see the BlueGene systems) the AltiVec unit can produce 4 64-bit results simultaneously.

Presently, the vector instructions supported by the compilers are not yet as powerful as those of the late stand-alone vectorprocessors used to be. For instance, chaining vector operations, i.e., feeding the result from one vector operation directly to another without the need to store the intermediate result is still limited. The capabilities of the vector units are, however, constantly improving and one can expect them to become on par with the vectorising compilers for the stand-alone systems from the former Cray and Fujitsu systems (see the Disappeared machines page) and the NEC compiler within a few years.

In a fair amount of cases the compiler can detect whether a part of a program can be vectorised. But in many cases the compiler has not enough information to decide whether it is safe to vectorise a particular part of the code. When it is known that this is the case one can give a hint to the compiler that it is safe to vectorise the code by means of so-called directives for Fortran and, equivalently, pragmas for C an C++ codes. Also libraries may hold vectorised versions of intrinsic functions or (numerical) algorithms. The vectorisation technique only works within a shared-memory environment as the compiler only can address memory that is in the local memory space.